Self-Driving Car Accidents in San Diego

Many vehicles on the road today have driver assistance technologies ranging from simple proximity alerts to full self-driving capability. Self-driving capability is still in development, but companies like Cruise and Waymo are already using driverless cars on California roads.

What is a self-driving car?

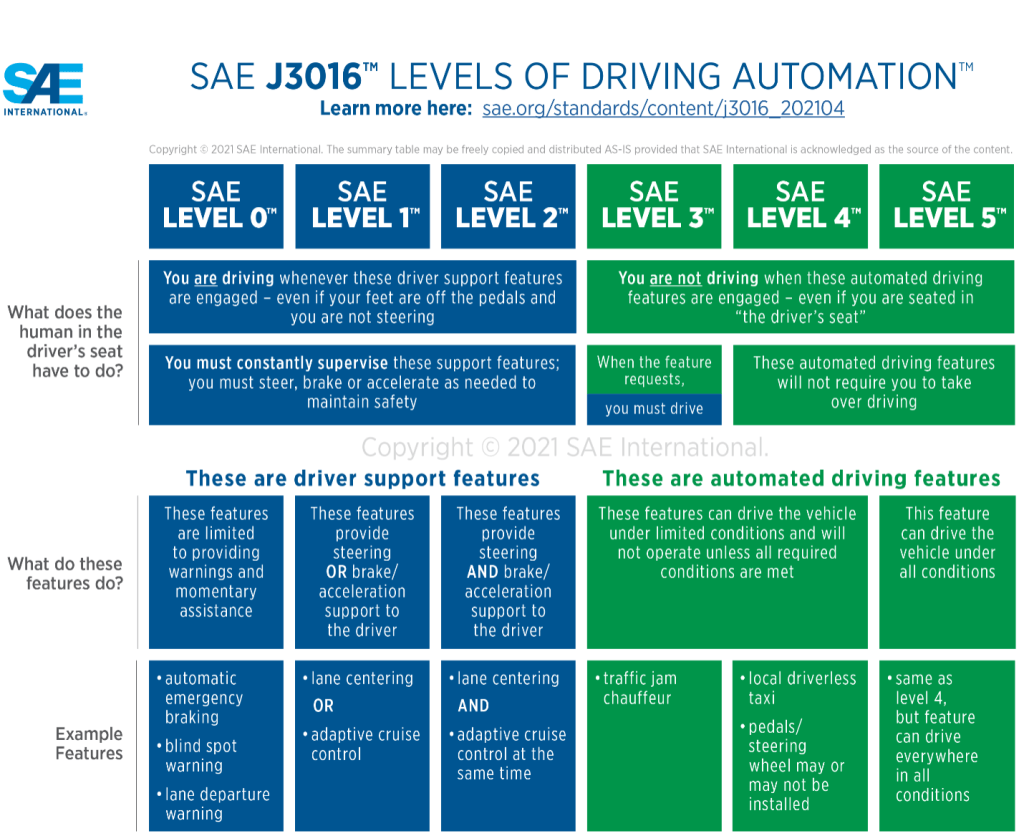

Self-driving cars are computerized vehicles that drive with different degrees of automation. Six different levels of automation classify self-driving cars:

Level 0: Momentary Driver Assistance. The driver is always in complete control of the vehicle. The system provides only passive support, like proximity alerts and blind spot warnings.

Level 1: Driver Assistance. The driver is fully responsible for driving while the system provides continuous assistance with speed OR with steering. The system does not give speed and steering assistance at the same time.

Level 2: Additional Driver Assistance. The driver is fully responsible for driving while the system provides continuous assistance with speed AND steering simultaneously. Level 2 offers lane centering and adaptive cruise control at the same time.

Level 3: Conditional Automation. The system handles all aspects of driving while the driver remains available to take over driving if the system cannot operate. You are not driving even though you are in the driver’s seat.

Level 4: High Automation. The system is fully responsible for driving tasks. A human driver is not needed to drive the vehicle. Level 4 vehicles operate only within limited service areas. By contrast, a Level 3 system drives itself only under certain circumstances, and the driver must be ready to take over when prompted.

Level 5: Full Automation. The system is entirely responsible for driving tasks under all conditions and on all roadways. A human driver is not needed to drive the vehicle.

Are self-driving cars legal?

The legality of self-driving cars varies based on the level of automation and the jurisdiction. Low-level automated features like blind spot warnings are legal everywhere in California and do not require special permits. High-level automated vehicles like driverless cars, however, require special permits and can only operate in limited service areas.

Level 0-Level 2: Cars with limited driver assistance, like cruise control and antilock brakes, have been on the road for decades. More recent technologies, like proximity alerts and seatbelt warnings, have also existed for many years. However, the technology on the streets today is far more advanced and includes automated lane centering and acceleration control.

Level 3: Mercedes already sells cars with Level 3 self-driving technology. The systems that Tesla and General Motors sell, although advanced, are classified as Level 2 and require constant driver supervision. With a Level 3 system, the vehicle drives itself under certain conditions while the driver remains available to take over when prompted or in case of an emergency. Thus, cars that can drive themselves are already available to everyday drivers in California. No permit is necessary to drive one. Self-driving delivery trucks are legal if they are under 10,000 pounds and meet all other conditions. Self-driving large trucks and semis are still not approved.

Level 4-Level 5: California allows companies to test fully automated, driverless cars on public roads if they meet certain conditions. The conditions include obtaining a testing permit, maintaining insurance coverage, submitting disengagement and accident reports, and complying with other regulations.

California has approved several companies to test driverless vehicles on the road. Waymo, owned by Google’s parent company Alphabet, and General Motors’ Cruise are already testing Level 4 fully self-driving cars on the streets of San Francisco. These vehicles are fully automated and do not have a driver. Waymo and Cruise have apps where you can sign up to take test rides. Currently, Level 4 automation is in use only in robotaxis and not in privately owned vehicles.

How safe are self-driving cars?

Human errors like speeding, distracted driving, drunk driving, and driver fatigue cause most accidents. As such, most accidents are entirely avoidable. Thus, advocates of self-driving cars say that advanced technology will eliminate human error and make the roads much safer. But is this the case?

Advantages and safety features

Computer-driven cars can eliminate human mistakes like driving while distracted, tired, or intoxicated. They also have advanced sensors and cameras that allow them, in theory, to process more information about the driving environment than a human can. Thus, computer-driven cars may monitor blind spots better than humans and have superior night vision. They also have the potential to improve the mobility of seniors, people with disabilities, and others who cannot easily drive.

Challenges and concerns

While self-driving cars have potential benefits, there are also many safety concerns. For one, computers lack common sense. For example, if a human driver sees a ball roll into the street, they know to watch out for a child running after it. By contrast, a computer may detect a round object but fail to recognize it as a ball or to anticipate a child running after it.

Computers do not have real-world knowledge. Car companies can only program computers for what they can anticipate, and there is no way to anticipate every situation that may occur on the road. Thus, self-driving cars may struggle to react to unpredictable situations and human behaviors.

Also, questions remain about how self-driving vehicles will handle complex traffic scenarios like construction zones and emergency response scenes. There have already been reports of automated vehicles interfering with emergency responses from fire trucks, ambulances, and police.

Moreover, automated systems have proven vulnerable to hacking, terrorism, and cyberattacks, which could have devastating consequences if exploited.

The National Highway Traffic Safety Administration (NHTSA) is probing the safety of self-driving technology. In 2021, the NHTSA opened an investigation into a series of crashes involving self-driving cars. Since 2016, the NHTSA has opened 38 investigations into Tesla crashes that involved automated driving software. There were 19 reported deaths from those accidents.

Extensive testing, research, and improvements are needed before self-driving cars are consistently safer than humans in all driving conditions. Self-driving cars have already caused fatal accidents, and we can expect more of them as the technology and regulatory framework evolve.

Who’s liable for self-driving car accidents?

If there is no driver in the vehicle, the vehicle’s maker will likely be liable for a crash. However, semi-autonomous cars require humans and machines to work together to operate the vehicle. So, who is responsible in the case of an accident: human or machine?

Human driver liability

If an accident happens while a human driver and autonomous features are engaged simultaneously, the driver and car maker will likely dispute who is at fault, and the case may prove challenging. You can expect both the driver and the car maker to deny liability and blame others for the accident. Proving fault in such cases will involve an extensive investigation that requires the skill of an experienced lawyer.

Car maker liability

Some self-driving car makers, like Tesla, claim that the driver is responsible for accidents. This view is wrong, as product liability and comparative fault laws offer pathways to liability for the car maker. Depending on what caused the accident, victims may have claims against both the driver and the car maker.

Other self-driving car makers, like Volvo and Mercedes-Benz, have reportedly assumed responsibility for accidents caused by their automated systems, meaning they will pay for the injuries when their systems cause accidents. However, car makers will not be eager to pay out claims, and those injured will still have to fight tooth and nail to receive a fair settlement. Furthermore, the payouts in such cases will likely come from insurance companies, and one thing remains certain: getting fair compensation from an insurance company will remain as difficult as ever.

Car part manufacturer liability

If a malfunction of one of the car’s components, such as computer software, causes an accident, the maker of the component, such as the software company, is liable. Self-driving cars operate with computers and computer software, which must function correctly for the car to drive safely. A malfunction by the car’s computer or other components can give rise to product liability for the maker of the failed car part.

Car owner liability

Under certain circumstances, the car’s owner may be responsible for the accident, even if the owner wasn’t in the vehicle. For example, the owner is liable for accidents if the owner permits the driver to drive the car. If the driver is a commercial driver, like a delivery truck or taxi driver, the driver’s company shares liability for the driver’s actions.

Government liability

The government is responsible for maintaining roads in a safe condition. Thus, the government may be liable for accidents caused by unsafe road conditions. Hazardous road conditions include inadequate signage, potholes, road defects, poorly designed intersections, and improper traffic control (such as near construction zones).

False advertising

A California law that took effect at the start of 2023 expressly forbids automakers and sellers from marketing partially automated cars as fully autonomous.

Tesla calls its systems “Autopilot” and “Full Self-Driving,” even though they are not fully autonomous and are considered SAE Level 2. The vehicles cannot drive themselves, and the driver must always be ready to intervene. As such, Tesla may be running afoul of California marketing laws and exposing itself to false advertising liability.

Indeed, the U.S. Justice Department is investigating Tesla’s claims about self-driving. The criminal probe is potentially serious because it could result in criminal charges against the company or individual executives. The investigation could also result in civil sanctions for the company. Prosecutors are investigating whether Tesla misled consumers, investors, and regulators by making false or overblown claims about its driver assistance technology.

Furthermore, the California DMV accused Tesla of falsely advertising its Autopilot and Full Self-Driving capabilities. The DMV complained that Tesla had misled customers with advertising that overstated how well the driver assistance programs worked. The DMV seeks remedies, including suspending Tesla’s license to sell cars in California and making the company pay restitution to drivers. This would be a significant blow for Tesla, as California is its largest U.S. market.

By contrast, Mercedes calls its self-driving system “Drive Pilot,” and GM calls its system “Super Cruise.”

What can victims recover?

If you were injured by a self-driving car, you can sue those responsible for past and future medical expenses, physical pain and suffering, emotional distress, mental anguish, lost enjoyment of life, lost wages and benefits, lost earning capacity, vehicle repairs and replacement, and other damages.

If a self-driving car kills someone, the victim’s family may be eligible to recover for wrongful death. Such claims may include loss of financial support, medical expenses related to the accident, funeral costs, lost companionship, pain and suffering, and other damages.

How do self-driving cars affect insurance rates?

Car insurance premiums are primarily based on a driver’s driving history, age, and vehicle value. Safer drivers pay less than drivers with a lot of tickets or accidents. So does a self-driving car result in lower insurance rates?

The answer is no. In fact, self-driving cars are likely to have higher insurance premiums because they cost more than other cars. More expensive cars mean greater potential for loss and higher insurance costs. Moreover, technologically advanced cars are more costly to repair because their components cost more and require specialists to perform the repairs. Do not expect self-driving cars to lower insurance premiums any time soon.

The future of self-driving cars

Owning a driverless car in the next ten years is unlikely because it will still be too expensive for most people. However, some estimates predict that driverless cars will be a $300 billion to $400 billion industry by 2035. The adoption of self-driving cars will likely increase as technology advances and the regulatory framework develops. Nonetheless, there will probably be challenges and hurdles, as regulators must carefully balance safety standards, liability issues, data privacy, insurance regulations, and cybersecurity concerns.

How we can help

If you were injured by a self-driving car, you should expect those responsible to do everything possible to avoid liability. The best way to protect your legal rights and maximize your claim is to hire a proven personal injury lawyer as soon as possible following the accident.

Depending on the circumstances of the case, it is likely that an insurance company will be involved. The insurance company’s goal is not to offer you a fair settlement. They aim to settle your case quickly for as little money as possible. You will need an experienced personal injury lawyer to receive anything close to the total value of your claim.

Insurance companies pay more to the clients of lawyers who have a proven track record of winning personal injury trials. We have the track record, skills, experience, and resources to take on the insurance companies and achieve the best possible result in your case.

We are a small law firm. Larger firms farm their work out to less experienced attorneys. When you hire us, you will have one of the best personal injury attorneys in San Diego directly handling your case. We will handle the legal aspects of your case so you can focus on your recovery.

Contact us today to schedule a complimentary strategy session. You will have the opportunity to meet with one of the city’s top lawyers to discuss your case and learn legal strategies to achieve the result you want.

Links

National Highway Traffic Safety Administration – Levels of Automation

California Department of Motor Vehicles – Article 3.7 Testing of Autonomous Vehicles

California Department of Motor Vehicles – Autonomous Vehicle Testing Permit Holders

National Highway Traffic Safety Administration – Standing General Order on Crash Reporting

Judicial Council of California – Civil Jury Instruction No. 405. Comparative Fault of Plaintiff

Judicial Council of California – Civil Jury Instruction No. 720. Motor Vehicle Owner Liability – Permissive Use of Vehicle

Judicial Council of California – Civil Jury Instruction No. 3701. Tort Liability Against Principal – Essential Factual Elements

California Legislative Information – Senate Bill No. 1398

National Highway Traffic Safety Administration – Part 573 Safety Recall Report